Choose your graphics experience

The table below helps you check whether your computer is powerful enough to run VR. An affordable option meets the minimum requirements, and the recommended configuration delivers the most immersive experience.

VR HMD: HTC Vive Pro, Oculus Rift S, Oculus Quest

GPU: Nvidia GeForce RTX 3080

CPU: AMD Ryzen 7 3800XT, Intel Core i7-10700K

Memory: 16 GB

Storage: 40 GB

OS: Windows 10

Immersive virtual shows

Discover music in fantastical sites well outside the confines of everyday existence. David Guetta, Steve Aoki, Charlotte de Witte and more iconic artists and virtual DJs deliver next-level performances in PRISM World.

See Shows

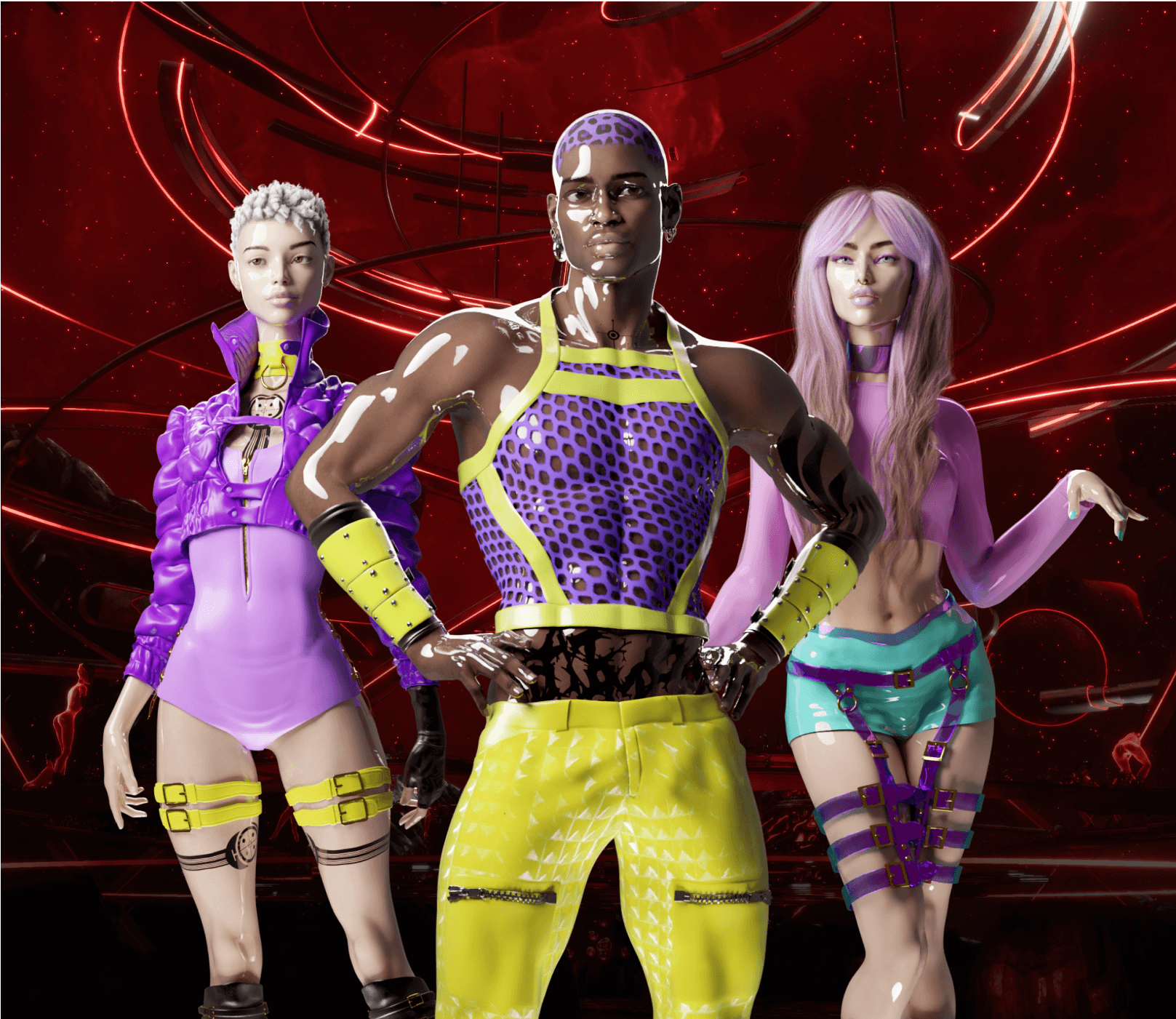

Digital Alter Ego

Who would you like to be in the metaverse of Sensorium Galaxy? Your choices are endless, and so is the number of virtual beings. New models, different styles, wide upgrade options: go unique with all these features in the store.

Choose Your Look

Frequently Asked Questions

It is OK to question this new type of reality. Find the most useful information about the metaverse of Sensorium Galaxy in the Q&A below. If you don’t find the right answer, please contact support.

Does Sensorium Galaxy require VR equipment?

Sensorium Galaxy is a global Social VR platform accessible on Desktop PC and PCVR. Its cross-play functionality ensures that Desktop and VR users can seamlessly explore virtual worlds and attend events together.

Can I use your product without any in-app purchases?

Absolutely! Sensorium Galaxy operates on a “free-to-play” model. Without making any in-app purchases, users can explore virtual worlds, engage with Virtual Beings, enjoy Virtual DJ performances, and much more.

Are in-app purchases refundable?

All in-app purchases are final and non-refundable. Please keep this in mind before purchasing within the app.

What payment platform facilitates in-app purchases?

Sensorium Galaxy primarily uses Stripe for in-app payment processing.